Search by meaning, not just keywords.

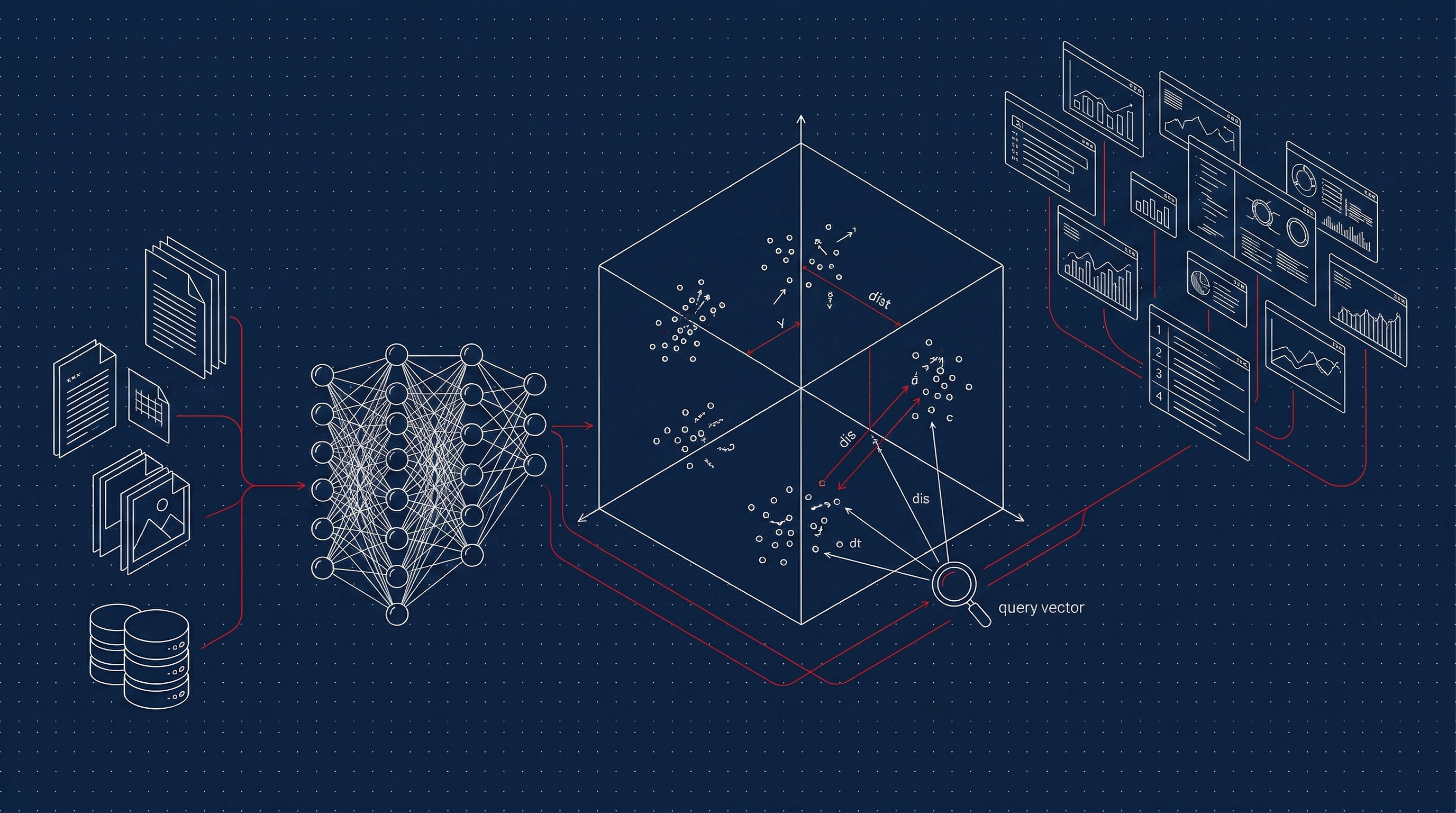

State-of-the-art embedding models and vector search turn your manufacturing data into a semantic intelligence layer. Find similar jobs, surface tribal knowledge, and power retrieval-augmented generation (RAG) pipelines, all from the meaning in your data. This is the infrastructure that gives American manufacturers a genuine AI advantage.